Teradata Corp: From Data Warehousing Pioneer to Cloud Transformation

I. Introduction & Episode Roadmap

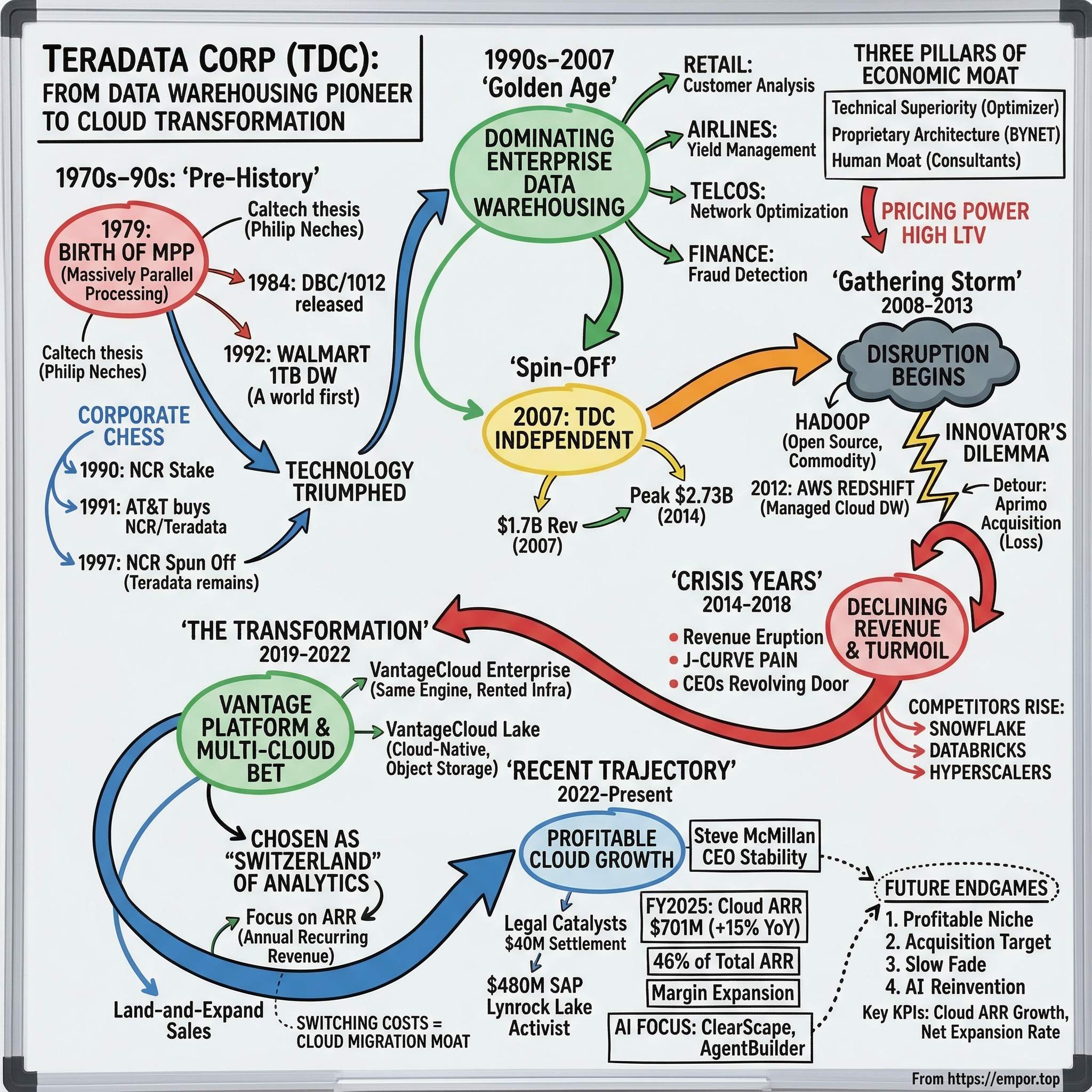

Picture this: it is 1992, and somewhere inside Walmart's headquarters in Bentonville, Arkansas, a machine is doing something that no computer on Earth has ever done before. It is ingesting, sorting, and analyzing one terabyte of point-of-sale data across thousands of stores, in near-real time. That machine is a Teradata system. The year is significant because, in 1992, one terabyte was so much data that most people could not even conceptualize it. The internet barely existed. Google would not be founded for another six years. And yet here was a company born from a Caltech graduate thesis, quietly building the infrastructure that would allow the world's largest retailer to understand what its customers were buying, when, where, and why.

Teradata Corporation did not just participate in the data warehousing revolution. It invented it. For two decades, if a Fortune 500 company needed to ask complex questions of massive datasets, there was only one serious answer: Teradata. Banks used it for risk calculations. Telcos used it for network optimization. Airlines used it for yield management. The company's name literally meant "tera" (trillions) of "data" — an audacious naming choice in an era when most databases measured capacity in megabytes.

The central question of this episode is one of the richest in enterprise technology: How did the undisputed king of data warehousing nearly miss the cloud revolution — and is it recovering? The Teradata story is a masterclass in the innovator's dilemma, business model transformation, and the brutal physics of enterprise technology disruption. The company went from a market capitalization exceeding thirteen billion dollars in 2012 to roughly three billion dollars today. Revenue peaked at $2.73 billion in 2014 and has declined to $1.66 billion. Three different CEOs have taken the helm in the past seven years.

And yet the patient may not be dead. Cloud annual recurring revenue reached $701 million at the end of 2025, growing at fifteen percent year-over-year. A $480 million legal settlement with SAP landed in February 2026. An activist investor just joined the board. The stock surged more than forty percent in a single day after a Q4 2025 earnings beat.

This is the story of a company that built something genuinely remarkable, watched the world change around it, nearly drowned, and is now swimming for shore. Whether it reaches land is one of the most interesting open questions in enterprise technology. Along the way, it offers a lens into questions that every technology investor and founder must grapple with: What happens when your moat erodes? How do you manage a business model transition without killing the patient? And what does it actually look like when a company tries to reinvent itself from the inside out?

II. The Pre-History: NCR, AT&T, and the Birth of Teradata (1979–1990s)

In 1979, in a modest office in Brentwood, California, a group of seven men incorporated a company with an impossibly ambitious name. Among the founders were Jack Shemer, Walter Muir, and the intellectual architect of the entire venture: Philip M. Neches, a Caltech alumnus whose doctoral thesis explored a radical concept — what if you could harness dozens of microprocessors, each with its own dedicated disk drive, working in parallel to answer questions that no single computer could handle alone?

The idea was called massively parallel processing, or MPP, and the simplest way to understand it is through an analogy. Imagine you need to count every red marble in a warehouse full of jars. The traditional approach — the IBM mainframe approach — was to hire one very fast worker and have them open jars one by one. Neches's insight was: why not hire a hundred workers, give each of them a section of the warehouse, and have them count simultaneously? The total job gets done in a fraction of the time. That is massively parallel processing. Every worker (processor) handles its own data independently, and their answers are combined at the end.
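The marble-counting analogy can be sketched in a few lines of Python. This is only an illustration of the divide, count, and combine pattern described above, not Teradata's implementation; the names `count_red` and `parallel_count` and the sample warehouse are invented for the example. Threads stand in for the workers, and in CPython they will not actually speed up CPU-bound work, so the point here is the structure rather than the timing.

```python
from concurrent.futures import ThreadPoolExecutor


def count_red(jars):
    """One worker counts the red marbles in its own section of the warehouse."""
    return sum(jar.count("red") for jar in jars)


def parallel_count(jars, workers):
    """Split the jars into sections, count each independently, then combine."""
    # Ceiling division so every jar lands in some section; at least 1 to
    # keep the slicing step valid when the warehouse is empty.
    size = max(1, -(-len(jars) // workers))
    sections = [jars[i:i + size] for i in range(0, len(jars), size)]
    # Each worker handles only its own section; partial counts are summed last.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(count_red, sections))


# Hypothetical warehouse: 100 jars, each a list of marble colors.
warehouse = [["red", "blue", "red"], ["green"], ["red"], ["blue", "red"]] * 25
total = parallel_count(warehouse, workers=4)
```

The answer does not depend on how many workers are hired; only the elapsed time would, on hardware where the workers truly run side by side.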

Citibank's Advanced Technology Group served as both intellectual collaborator and first customer when Teradata released the DBC/1012 database machine in 1984. It was a back-end system for mainframe computers, but it harnessed Intel microprocessors in a shared-nothing architecture that was genuinely revolutionary. Each processor owned its data. No processor had to wait for another. And performance scaled linearly — double the processors, roughly double the speed. In an era when IBM's mainframe-based DB2 hit a hard ceiling on data volume, this was a breakthrough. The "shared-nothing" design was key: by ensuring that no two processors ever needed to access the same piece of data simultaneously, Teradata eliminated the contention and bottlenecks that plagued traditional shared-disk database designs. It was an elegant solution to a fundamental computer science problem, and it remains the architectural foundation of Teradata's systems four decades later.
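To make "each processor owned its data" concrete, here is a minimal sketch of a shared-nothing layout, under stated assumptions. Rows are assigned to exactly one node by hashing a key, each node answers a question using only the rows it owns, and the partial answers are summed at the end. The `Node` class, the `txn_id` and `store_id` fields, and the use of CRC32 as the hash are all assumptions made for illustration; the text above does not describe Teradata's actual hashing scheme.

```python
import zlib


class Node:
    """A hypothetical processing unit that owns its rows outright."""

    def __init__(self):
        self.rows = []

    def local_total(self, store_id):
        # Works only on rows this node owns; no other node is consulted.
        return sum(r["amount"] for r in self.rows if r["store_id"] == store_id)


def owner(key, n_nodes):
    # Deterministic hash, so a given key always maps to the same node.
    return zlib.crc32(str(key).encode()) % n_nodes


def load(rows, n_nodes):
    """Distribute rows so that each one lives on exactly one node."""
    nodes = [Node() for _ in range(n_nodes)]
    for row in rows:
        nodes[owner(row["txn_id"], n_nodes)].rows.append(row)
    return nodes


def total_sales(nodes, store_id):
    # Combine the independent per-node answers.
    return sum(node.local_total(store_id) for node in nodes)


# Hypothetical data: 300 transactions of 10 units each across 3 stores.
rows = [{"txn_id": i, "store_id": i % 3, "amount": 10} for i in range(300)]
nodes = load(rows, n_nodes=8)
store_zero_total = total_sales(nodes, store_id=0)
```

Because no row is ever stored on two nodes, there is nothing to lock or coordinate during the scan, which is the property the paragraph above credits for the near-linear scaling.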

The company went public in 1987 and began attracting the kinds of customers that had data problems too large for anything else: retailers tracking point-of-sale transactions, telecom companies analyzing call detail records, and financial institutions processing millions of daily transactions.

Then came the corporate chess game that would define Teradata's organizational identity for twenty-five years. NCR Corporation, the legacy cash register and computing company, acquired a nine percent equity stake in Teradata in 1990 and established a joint development agreement. By December 1991, NCR announced a full acquisition, exchanging each Teradata share for $30.25 worth of AT&T common stock — valued at approximately $250 million. The deal closed on February 28, 1992, and Teradata became a division of NCR.

Here is where it gets complicated. Just three months before this acquisition, AT&T had bought NCR itself for $7.4 billion. So Teradata was now a division of a division of a telephone company. The AT&T era that followed was marked by strategic confusion of the highest order. In 1994, NCR's name was changed to AT&T Global Information Solutions — a widely ridiculed move that erased decades of brand equity. By 1996, the experiment was pronounced dead and the name reverted to NCR. On January 1, 1997, AT&T spun off NCR as an independent company once more, and Teradata remained inside, now the crown jewel of a company that sold point-of-sale terminals and ATMs.

Through all this corporate shuffling, something remarkable happened: Teradata just kept winning. The technology was too good and the customer need too urgent for organizational dysfunction to kill it.

While AT&T's executives debated corporate structure and brand names in conference rooms, Teradata's engineers and sales teams were solving real problems for real customers, problems that grew larger and more valuable every year as businesses generated exponentially more data. The telecommunications industry alone was producing call detail records at a rate that would have been unimaginable a decade earlier. Retail chains were scanning millions of items per day across thousands of locations. Banks were processing credit card transactions at speeds that required analytical capabilities far beyond what traditional mainframes could deliver. That 1992 Walmart installation, the world's first terabyte-scale data warehouse, expanded relentlessly. By 1997, the Walmart system had more than tripled, from 7.5 terabytes to over 24 terabytes, and was handling more than 50,000 queries per week, making it the world's largest commercial data warehouse. The lesson was clear: when you solve a problem that nobody else can solve, corporate ownership is almost irrelevant.

But the fact that Teradata thrived despite, not because of, its corporate parents planted the seed for what would come next.

III. The Golden Age: Dominating Enterprise Data Warehousing (1990s–2007)

Walk into the IT department of any Fortune 100 company in the year 2005 and ask about their analytics infrastructure, and odds were overwhelming that someone would mention Teradata. The company did not just occupy a market position — it defined the category. Enterprise data warehousing was Teradata, and Teradata was enterprise data warehousing.

The customer roster read like a who's who of global business. Walmart's system eventually grew to an estimated thirty-plus petabytes, capturing point-of-sale data every second from five thousand locations across six countries. Target, another Teradata stalwart, used the platform for the kind of customer behavior analysis that would later become famous (and controversial) when analysts could predict major life events from purchasing patterns.

Airlines used Teradata to optimize seat pricing — the field known as yield management — making decisions worth hundreds of millions of dollars based on real-time demand patterns. Financial institutions used it for fraud detection, running complex pattern-matching algorithms across billions of transactions to identify suspicious activity in near-real time. Telecommunications companies used it to analyze call detail records, optimize network capacity, and predict customer churn. In each of these industries, the common thread was the same: the data volumes were too large, the queries too complex, and the business stakes too high for anything other than Teradata.

The economic moat during this golden age was formidable, built on three reinforcing pillars. First was raw technical superiority. Teradata's query optimizer — the engine that decides how to execute a database query most efficiently — was years ahead of anything Oracle or IBM could offer for complex, mixed-workload analytics. Think of a query optimizer as a master chess player planning many moves ahead; Teradata's optimizer could look further ahead and adapt its strategy mid-game in a way competitors simply could not match.

Second was a deeply proprietary architecture. Teradata ran on purpose-built hardware with a custom networking layer called BYNET — a high-speed interconnect that coordinated communication between processors with latency so low it gave Teradata a measurable performance advantage. Customers did not just buy software; they bought purpose-built appliances that locked them into the Teradata ecosystem.

Third was the human moat. Teradata invested heavily in professional services, building teams of consultants who embedded themselves inside client organizations for years. These consultants accumulated irreplaceable institutional knowledge about each customer's data models, business logic, and analytical workflows. Replacing Teradata meant not just replacing technology but replacing decades of accumulated expertise.

The business model was extraordinarily profitable. High-margin hardware appliance sales provided the anchor, complemented by perpetual software licenses that generated revenue on day one, and then followed by maintenance contracts and consulting services that created recurring revenue streams. Customer lifetime value was enormous because once a company built its analytical infrastructure on Teradata, switching was essentially unthinkable. Migration would cost tens of millions of dollars, take years, and risk breaking mission-critical analytical pipelines.

Oracle and IBM tried to compete, of course. Oracle's Exadata appliance, introduced in 2008, and IBM's 2010 acquisition of Netezza were both attempts to challenge Teradata in the high-performance analytics space. But neither could match Teradata's workload management capabilities: the ability to run thousands of concurrent queries of vastly different complexity without any single query starving the others for resources. This is a problem that sounds simple but is devilishly hard in practice. Imagine a restaurant kitchen that needs to simultaneously prepare a five-minute appetizer and a three-hour roast, multiplied by a thousand tables, all while ensuring every dish arrives on time. That was Teradata's specialty.
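The kitchen analogy can be turned into a toy simulation. The sketch below compares running queries strictly one after another with plain round-robin time slicing, a generic scheduling technique used here only to show why a short query should not have to wait behind a long one. It is not a description of Teradata's workload manager, and the function names and work units (minutes of cooking) are invented for the example.

```python
from collections import deque


def run_fifo(queries):
    """Run each query to completion in arrival order; return finish times."""
    clock = 0
    finished = {}
    for name, units in queries.items():
        clock += units
        finished[name] = clock
    return finished


def run_round_robin(queries, slice_units=1):
    """Give each unfinished query one time slice in turn; return finish times."""
    queue = deque(queries.items())
    clock = 0
    finished = {}
    while queue:
        name, remaining = queue.popleft()
        step = min(slice_units, remaining)
        clock += step
        remaining -= step
        if remaining == 0:
            finished[name] = clock
        else:
            # Not done yet: go to the back of the line.
            queue.append((name, remaining))
    return finished


# The three-hour roast arrives just before the five-minute appetizer.
queries = {"roast": 180, "appetizer": 5}
fifo_times = run_fifo(queries)           # appetizer waits behind the roast
shared_times = run_round_robin(queries)  # appetizer finishes after 10 units
```

In this toy case the roast finishes at the same time either way, while the appetizer's wait drops from 185 units to 10. Doing that for thousands of queries with different priorities, memory needs, and deadlines is the hard part the paragraph above refers to.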

The pricing power during this era was remarkable. Because no competitor could match Teradata's performance for mission-critical analytics at scale, enterprises had limited negotiating leverage. A Fortune 500 bank considering a Teradata deployment was not comparing Teradata to Oracle on price — it was comparing the cost of a Teradata system to the cost of not having answers to business questions that were worth billions of dollars. When the alternative is flying blind on risk calculations or fraud detection, a ten-million-dollar database investment looks like a bargain. This dynamic produced customer lifetime values that were extraordinary by any standard in enterprise technology — single customers spending hundreds of millions of dollars over multi-decade relationships.

The NCR relationship, meanwhile, had become more baggage than asset. NCR was primarily a point-of-sale terminals and ATM company. Its sales channels, customer base, and strategic priorities had little overlap with Teradata's enterprise analytics business. Teradata's management team increasingly felt constrained — unable to set its own investment priorities, pursue its own acquisitions, or build its own brand in the market. Analysts covering NCR struggled with the conglomerate structure, often undervaluing Teradata because it was bundled with a lower-growth hardware business.

By the time NCR's board began seriously considering a spin-off in 2006, the division was contributing only about twenty-five percent of NCR's revenues but generating over sixty percent of its operating income. Teradata was the diamond locked inside a hardware company's safe. The question was no longer whether it should be freed, but when.

IV. The Spin-Off: Independence and New Challenges (2007)

On January 5, 2007, NCR's Board of Directors approved what many analysts had been calling for years: the separation of Teradata into its own publicly traded company. The spin-off completed on October 1, 2007, structured as a tax-free stock dividend — one share of Teradata common stock for each share of NCR common stock held. When Teradata began trading on the New York Stock Exchange under the ticker TDC that morning, the opening price was approximately twenty-seven dollars per share. The market valued the newly independent company at roughly $4.6 billion.

The financial profile was impressive. Revenue had reached $1.70 billion. Operating margins were strong enough that Teradata represented over half of the total value allocated to the two post-spin entities — a cost basis allocation of 52.37 percent to Teradata versus 47.63 percent to NCR, despite Teradata being the smaller company by revenue. The customer base included hundreds of the world's largest enterprises, and the retention rate was extraordinary.

Mike Koehler stepped into the CEO role for the newly independent company. Koehler had spent his career in enterprise technology sales and knew Teradata's customers intimately. His job, as the market saw it, was straightforward: maintain the golden goose. Keep the big enterprise relationships humming, continue the slow-and-steady growth trajectory, and let the stock rerate as a pure-play analytics company rather than a division buried inside NCR.

And for several years, the plan worked spectacularly. Revenue climbed from $1.70 billion in 2007 to $1.76 billion in 2008, then accelerated dramatically — $1.94 billion in 2010, $2.36 billion in 2011, $2.67 billion in 2012. The stock price followed, soaring from twenty-seven dollars at the spin-off to an all-time high of nearly eighty-one dollars intraday in September 2012. Teradata's market capitalization briefly exceeded thirteen billion dollars. The newly liberated company appeared to be proving that independence was exactly what the business needed.

Wall Street loved the story. Teradata was a rare thing in enterprise technology — a pure-play company with deep domain expertise, predictable revenue, and sticky customers. Analysts used words like "mission-critical" and "best-of-breed" in their research notes. The company's investor day presentations featured case studies from household-name companies, each one reinforcing the narrative that Teradata was indispensable. Koehler used some of the company's prodigious cash flow for share buybacks, further boosting the stock. It was a golden era for Teradata shareholders.

But beneath the surface of those impressive numbers, a storm was gathering that Koehler and his team were either unable or unwilling to see. The data warehousing market that Teradata had invented was about to be disrupted by forces that made the company's greatest strengths — proprietary hardware, perpetual licenses, and deep on-premises integration — into existential liabilities. The very things that created Teradata's golden age would nearly destroy it.

V. The Gathering Storm: Hadoop, Cloud, and Disruption (2008–2013)

In 2006, Doug Cutting, by then at Yahoo, and his collaborator Mike Cafarella released the first versions of Hadoop, an open-source framework that could store and process massive datasets across clusters of commodity servers. The technology was inspired by Google's published papers on the Google File System and MapReduce, and it promised something that sounded almost too good to be true: the ability to handle enormous data volumes at a fraction of Teradata's cost.

The Hadoop pitch to enterprise customers was devastating in its simplicity. Instead of spending millions on a proprietary Teradata appliance, a company could buy a hundred cheap commodity servers, install free open-source software, and start processing data at massive scale. It was not as fast or as polished as Teradata for structured analytics, but it was orders of magnitude cheaper — and it could handle unstructured data (text, images, logs) that Teradata was never designed for.

By 2010, "Big Data" had become the most over-hyped phrase in enterprise technology. Every conference, every vendor pitch, every consulting firm white paper was about Big Data, and the subtext was always the same: the old way of doing things — meaning Teradata — was expensive, rigid, and outdated. The new way was open-source, commodity-priced, and flexible. Venture capitalists poured billions into Hadoop ecosystem startups like Cloudera, Hortonworks, and MapR. The phrase "data lake" entered the lexicon as the supposed successor to the "data warehouse."

Then came the truly existential threat. On November 28, 2012, Amazon Web Services announced Redshift, a fully managed, cloud-based data warehousing service. The significance is hard to overstate. For the first time, a company could get a petabyte-scale analytical database without buying a single piece of hardware, without hiring a single database administrator, and without writing a single check to Teradata. The pricing was aggressive, starting at a fraction of Teradata's per-terabyte cost. Google was already offering BigQuery, and Microsoft later followed with Azure SQL Data Warehouse.

Inside Teradata's offices, the debates were fierce. The sales organization, structured around multi-million-dollar appliance deals with long sales cycles, viewed cloud as a direct threat to their compensation. The engineering teams, steeped in twenty-five years of hardware-optimized database design, questioned whether cloud infrastructure could ever match purpose-built appliances for performance.

And the finance team watched nervously as the company's entire business model — high-margin hardware, perpetual licenses, and lucrative maintenance contracts — appeared increasingly misaligned with where the market was heading. The problem was not that anyone at Teradata was stupid or oblivious. The problem was structural: every person in the organization had rational, self-interested reasons to resist the change that the market demanded. This is the innovator's dilemma at its most insidious — not a failure of intelligence, but a failure of incentive alignment.

Teradata's response to Hadoop was reasonable but ultimately insufficient. The company proposed a "Unified Data Architecture" that positioned Hadoop as complementary to Teradata rather than competitive. The 2011 acquisition of Aster Data for $263 million gave Teradata MapReduce capabilities. A partnership with Hortonworks followed. The 2014 acquisition of Think Big Analytics added Hadoop consulting expertise. The logic was sound — Hadoop for cheap unstructured storage, Teradata for high-performance structured analytics — but it missed the larger point. The market narrative had shifted. Customers were not asking "How do I use Hadoop alongside Teradata?" They were asking "Do I still need Teradata at all?"

The financial impact began showing up in the numbers by 2013. Revenue growth stalled at $2.69 billion, up less than one percent from the prior year, after years of double-digit expansion. The growth engine that had powered the stock from twenty-seven to eighty-one dollars was sputtering. More worryingly, the nature of customer conversations was changing. CIOs who once treated Teradata as an unquestioned line item in the IT budget were now asking procurement teams to evaluate alternatives. The phrase "cloud-first" was entering enterprise technology strategy documents, and Teradata had no cloud offering.

Meanwhile, Koehler made a costly strategic detour. The 2010 acquisition of Aprimo, a marketing applications company, for approximately $525 million was intended to diversify Teradata beyond core data warehousing. The logic was that combining marketing automation with data analytics would create a differentiated platform. In practice, Aprimo was a distraction from the core business at the worst possible time, pulling engineering resources, management attention, and capital away from the cloud pivot that the company desperately needed. The investment would eventually be written down by $340 million and sold for roughly $90 million in 2016, recovering only about a sixth of the purchase price and leaving a gap of roughly $435 million.

The cultural challenge ran deeper than strategy. Teradata was a hardware company at its core. Its engineers designed physical appliances. Its salespeople sold boxes. Its services teams installed and maintained on-premises systems. The entire organizational DNA was built for a world where customers bought equipment, installed it in their data centers, and maintained it for a decade. Asking that organization to embrace a model where infrastructure was rented by the hour and software was delivered as a service was like asking a luxury car manufacturer to start building bicycles. Not impossible, but requiring a fundamental transformation of identity.

Consider the sales compensation structure alone. In the appliance era, a Teradata salesperson closing a single large deal might earn a commission worth hundreds of thousands of dollars — or more — in a single quarter. In a subscription model, the same customer relationship generates a smaller initial payment spread over multiple years, with commission payments similarly distributed. Aligning sales incentives with the new economic reality required not just changing comp plans but changing the kind of person who wanted to sell for Teradata. The hunters who thrived on big-game appliance deals were temperamentally different from the farmers who excel at land-and-expand cloud motions. This human dimension of business model transformation is often underestimated by analysts and investors who focus on the financial mechanics.

By the end of 2013, the storm clouds were unmistakable. Revenue had plateaued. The competitive narrative had shifted decisively toward cloud. And Teradata's most valuable asset — its installed base of large enterprise customers — was becoming a defensive position rather than a growth platform. The golden age was over.

VI. The Crisis Years: Declining Revenue and Leadership Turmoil (2014–2018)

The year 2014 was the last year Teradata would see revenue growth for a long time. The $2.73 billion mark represented a peak that the company has not approached since and, barring a dramatic transformation, may never approach again. What followed was one of the most painful declines in enterprise technology history — not the sudden collapse of a startup, but the slow, grinding erosion of a dominant franchise.

Revenue fell to $2.53 billion in 2015, then $2.32 billion in 2016, then $2.16 billion in 2017. Each year, the story was the same: on-premises appliance sales declining faster than new subscription and cloud revenue could replace them. Perpetual license revenue, once the engine of profitability, was shrinking as customers either deferred purchases or began evaluating cloud alternatives. Consulting services revenue declined as fewer customers were deploying new on-premises systems.
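The pace of that decline is easier to see as percentages. The short calculation below uses only the revenue figures quoted in this section (in billions of dollars); the variable names are just for the example.

```python
# Revenue figures as stated in the text, in billions of dollars.
revenue_bn = {2014: 2.73, 2015: 2.53, 2016: 2.32, 2017: 2.16}


def pct_change(new, old):
    """Percentage change from old to new."""
    return (new - old) / old * 100


years = sorted(revenue_bn)
# Year-over-year change for each year after the 2014 peak.
yoy = {y: round(pct_change(revenue_bn[y], revenue_bn[y - 1]), 1) for y in years[1:]}
# Cumulative change from the 2014 peak to 2017.
from_peak = round(pct_change(revenue_bn[2017], revenue_bn[2014]), 1)

for year, change in yoy.items():
    print(f"{year}: {change:+.1f}% year over year")
print(f"2014 to 2017: {from_peak:+.1f}% from peak")
```

On these figures, revenue shrank by roughly seven to eight percent a year, which compounds to a decline of about twenty-one percent from the 2014 peak by 2017.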

It was the classic J-curve of a business model transition — the old revenue falling faster than the new revenue rising — except that Teradata had started the transition late, making the curve steeper and more painful than it needed to be. Companies like Adobe and Microsoft, which began their subscription transitions earlier and with more conviction, managed to compress the painful trough into two or three years. Teradata's trough would stretch for nearly a decade.
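A toy model shows why the size of the new business at the moment the old one starts shrinking matters so much. Every number below is hypothetical and chosen only to produce the characteristic dip and recovery; none of them is a Teradata figure, and the function `j_curve` is invented for the example. Legacy revenue declines at a fixed annual rate while recurring revenue compounds from a small base.

```python
def j_curve(legacy, recurring, legacy_decline, recurring_growth, years):
    """Return total revenue for year 0 through `years` under fixed annual rates."""
    totals = []
    for _ in range(years + 1):
        totals.append(legacy + recurring)
        legacy *= 1 - legacy_decline        # old business shrinks
        recurring *= 1 + recurring_growth   # new business compounds
    return totals


# Hypothetical company whose recurring business is already 5% of legacy
# revenue when the legacy decline begins.
early = j_curve(legacy=100.0, recurring=5.0,
                legacy_decline=0.15, recurring_growth=0.40, years=10)

# Same company, but with a recurring base of only 1% at that moment.
late = j_curve(legacy=100.0, recurring=1.0,
               legacy_decline=0.15, recurring_growth=0.40, years=10)

early_trough_year = early.index(min(early))
late_trough_year = late.index(min(late))
```

With these assumed rates, the company with the smaller recurring base hits a deeper trough and hits it later, which is the shape the paragraph above describes: the same transition, made more painful by a late start.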

On May 5, 2016, Mike Koehler stepped down as CEO. His legacy was mixed. He had successfully led the spin-off from NCR and grown revenue by sixty percent over his first six years. But he had also presided over the Aprimo debacle and, more critically, failed to pivot the company toward cloud and subscription models with the urgency the market demanded. Victor Lund, a seasoned executive and longtime Teradata board member, took over as president and CEO.

Lund was a pragmatist, not a visionary — and at that moment, pragmatism was arguably what the company needed. His most important contribution was acknowledging the problem clearly and beginning the subscription transition in earnest. Teradata introduced a consistent subscription-based licensing approach that could work on-premises or in the cloud, responding to customer feedback that the company's pricing had become bewilderingly complex. The IntelliCloud managed cloud service debuted, offering Teradata's analytical power in a hosted environment.

But stabilization was not enough. Outside Teradata's walls, the competitive landscape was reshaping itself at warp speed. Snowflake, founded in 2012 by two former Oracle engineers and a co-founder of the database startup Vectorwise, had launched its cloud-native data warehouse in 2015 and was growing explosively. Snowflake's architecture was revolutionary: it fully separated storage from compute, allowing customers to scale each independently and pay only for what they used. Amazon Redshift was capturing cloud-first customers by the thousands. Google BigQuery offered a serverless model where customers paid per query with zero infrastructure management. Databricks, built on Apache Spark, was emerging as the data engineering platform of choice.

Each of these competitors had something Teradata lacked: a cloud-native architecture designed from the ground up for the economics and operational model of public cloud. Teradata was trying to adapt a decades-old on-premises architecture to the cloud, while competitors had been born there.

The stock price reflected the agony. From the 2012 high of nearly eighty-one dollars, TDC shares declined to the mid-twenties by the time Koehler left, then recovered only partially. By late 2018, the stock was trading in the mid-thirties, above its worst levels but still down more than fifty-five percent from the peak.

Activist investor JANA Partners entered the picture in February 2018, disclosing a 7.3 percent stake. JANA's involvement was the market's way of saying: the current trajectory is unacceptable. A cooperation agreement in October 2018 placed two JANA-recommended directors on the board, adding external pressure for more aggressive action.

In January 2019, the board made a bold move, appointing Oliver Ratzesberger as CEO. Ratzesberger was Teradata's Chief Operating Officer and represented the kind of leader the company arguably needed years earlier — a technologist with deep analytics experience who had previously led the expansion of analytics at eBay. He co-authored "The Sentient Enterprise," a book about the evolution of data-driven decision making that made The Wall Street Journal bestseller list. He spoke passionately about Teradata's need to reinvent itself, telling audiences that the company had to rebrand and reposition: "There's a new company in town."

Ratzesberger launched Teradata Vantage, a reimagined analytics platform that combined the traditional SQL engine with open-source languages like Python and R. The vision was compelling: transform Teradata from a data warehousing appliance vendor into a cloud-agnostic analytics platform. But vision alone was not enough. After disappointing financial results and a sharp stock decline, Ratzesberger departed in November 2019, only about ten months after taking the CEO role. Victor Lund was called back as interim CEO on the same day. It was the kind of leadership revolving door that demoralizes employees, confuses customers, and terrifies investors.

What went wrong? In retrospect, the diagnosis was straightforward, even if the cure was not. Teradata had fallen into the classic trap of listening too carefully to its best customers. Those customers — the biggest banks, the largest retailers — kept telling Teradata they wanted better on-premises performance, more features in the existing appliance, tighter integration with their data centers. And Teradata delivered exactly what those customers asked for. The problem was that those same customers were also quietly spinning up Amazon Redshift clusters for new workloads, experimenting with Snowflake for departmental analytics, and building data lakes on cloud storage. By the time they told Teradata they were considering a full migration away from on-premises, Teradata had no credible cloud offering to retain them.

The balance sheet, while not in crisis, showed the strain. Revenue had fallen by roughly twenty percent from peak. Operating expenses had been cut, but not fast enough to preserve margins. R&D spending, the lifeblood of any technology company, was caught in a squeeze — the company needed to invest more in cloud while revenues from the legacy business were shrinking. Cash flow remained positive, a testament to the durability of maintenance contracts, but the trajectory was unmistakable.

The honest assessment, one that several Teradata executives have acknowledged publicly, was brutally simple: the company was late to cloud. Not a little late. Years late. And in technology, being years late to a paradigm shift can be a death sentence.

VII. The Transformation: Vantage and Multi-Cloud Bet (2019–2022)

On June 8, 2020, Steve McMillan walked into the Teradata CEO office — virtually, given the pandemic — carrying a resume that read like a playbook for exactly this moment. He had spent nearly twenty years at IBM in increasingly senior leadership roles, then served as Senior Vice President of Customer Success and Managed Cloud Services at Oracle, where he guided Oracle's own painful transformation from traditional hosting to cloud offerings. Most recently, he had been Executive Vice President of Global Services at F5 Networks. He had, in other words, seen cloud transformations from the inside at two of the world's largest enterprise technology companies. He knew what worked, what failed, and where the landmines were buried.

McMillan inherited a company in worse shape than the numbers suggested. Revenue was $1.84 billion and falling. The stock was hovering around twenty dollars — less than a quarter of its all-time high. Three different people had held the CEO title in the past four years. Employee morale was fragile, with talent defecting to Snowflake, Databricks, and the hyperscalers, where stock options held more promise than Teradata's declining equity. Customers were confused about the strategic direction. Reports circulated of major accounts — including names like Apple, eBay, Bank of America, and General Motors — either leaving Teradata or actively planning exits to cloud-native alternatives. And then there was COVID-19, which had just sent the global economy into freefall.

But McMillan also inherited something valuable: the Vantage platform that Ratzesberger had launched. Rather than scrapping his predecessor's vision and starting over — a temptation many new CEOs cannot resist — McMillan doubled down on the cloud-agnostic, multi-cloud strategy and focused on execution. The Vantage architecture was reimagined into two distinct offerings. VantageCloud Enterprise maintained the traditional Teradata MPP architecture but replaced physical nodes with virtual machines on AWS, Azure, or Google Cloud — essentially the same high-performance engine running on rented infrastructure rather than owned hardware. VantageCloud Lake represented the more radical leap, moving to an object storage-centric architecture where data lived in services like Amazon S3 or Azure Blob Storage, with elastic compute clusters that could be spun up and down independently.

The multi-cloud bet was deliberate and differentiated. While Snowflake also supported multiple clouds, and Databricks was building toward multi-cloud, Teradata positioned itself as the only enterprise analytics platform available natively on all three major hyperscalers with full workload portability.

The pitch to large enterprises was compelling in its simplicity: you should not be locked into a single cloud vendor, and Teradata is the Switzerland of cloud analytics. For a global bank operating across regulatory jurisdictions, or a multinational retailer with data sovereignty requirements in multiple countries, this message resonated.

Internally, the transformation was as painful as any McKinsey case study would predict. The sales organization had to be retrained from selling multi-million-dollar appliance deals to selling consumption-based cloud subscriptions. Compensation structures changed. Legacy products were retired. Engineers accustomed to designing for purpose-built hardware had to learn containerization, Kubernetes, and cloud-native development patterns. The company had to maintain its existing on-premises customers — still the majority of revenue — while simultaneously building and selling an entirely new cloud product line.

The financial model underwent a fundamental overhaul. Teradata introduced Annual Recurring Revenue as its north star metric, replacing the traditional emphasis on total revenue. This was a deliberate reframing — total revenue would inevitably decline as perpetual license deals evaporated, but ARR would capture the health of the subscription and cloud business that represented the company's future. Cloud ARR became the most closely watched metric on every earnings call.

The J-curve played out as expected, though the damage showed up more in the revenue mix than in the headline number. Total revenue slipped from $1.84 billion in 2020 to $1.80 billion in 2022 while on-premises perpetual license revenue cratered. But cloud ARR was growing, and growing fast. The company reported cloud ARR of approximately $282 million at the end of 2022, up significantly from near zero just a few years earlier. It was not yet enough to offset the on-premises decline, but the trajectory was unmistakable.

COVID-19, paradoxically, helped. The pandemic accelerated every enterprise's digital transformation timeline. Companies that had planned five-year cloud migrations compressed them into two years. Teradata's existing customers, suddenly facing the reality that on-premises data centers were harder to manage with a remote workforce, became more receptive to cloud migration conversations.

The managed cloud offering — VantageCloud Enterprise — provided a lower-risk entry point: same Teradata workloads, same performance, but running on cloud infrastructure rather than customer-owned hardware. For a risk-averse CIO at a major bank, this was an appealing proposition: get the operational benefits of cloud (no hardware to manage, elastic scaling, reduced data center footprint) without the risk of a full platform migration to an unfamiliar system like Snowflake or Databricks.

Customer wins during this period provided critical proof points. Large financial institutions that had been Teradata customers for decades began migrating their Teradata workloads to VantageCloud rather than ripping them out entirely.

This was the key strategic insight: for many of Teradata's largest customers, the cost and risk of migrating away from Teradata entirely were higher than the cost of migrating Teradata itself to the cloud. The switching costs that had been Teradata's moat in the on-premises era were now serving as a cloud migration moat, keeping customers within the Teradata ecosystem even as they moved to new infrastructure. It was an elegant judo move: using the very lock-in that critics decried as legacy baggage to fuel the cloud transition.

The go-to-market transformation was equally significant. In the appliance era, Teradata's sales cycle was a multi-month, multi-million-dollar event involving senior executives on both sides, extensive proof-of-concept testing, and lengthy procurement negotiations. In the cloud era, the company needed a different motion: land-and-expand. Get a customer started on VantageCloud with a smaller initial commitment, prove value quickly, and expand the footprint over time. This required not just new compensation structures but a fundamentally different mindset among the sales force — selling outcomes and consumption rather than boxes and perpetual licenses.

The company also began investing in cloud marketplace presence, making VantageCloud available for purchase through the AWS Marketplace, Azure Marketplace, and Google Cloud Marketplace. This was strategically important because many large enterprises had committed cloud spending budgets with their hyperscaler partners. If a customer could apply their existing AWS spend commitment toward a Teradata subscription, it lowered the procurement friction dramatically. It also gave Teradata access to the hyperscalers' sales teams, who could co-sell VantageCloud to their own customers.

By mid-2022, the transformation was showing enough momentum to generate cautious optimism. Cloud ARR was growing, new product capabilities were earning analyst recognition, and the customer base was stabilizing. The multi-cloud strategy was resonating with enterprise procurement teams that were increasingly wary of single-vendor lock-in. McMillan had brought something the company desperately needed: consistent, disciplined execution without dramatic strategy reversals.

VIII. Leadership Change and Recent Trajectory (2022–Present)

Steve McMillan's continued tenure as CEO — now approaching six years — is itself a notable achievement for a company that burned through three leaders in less than four years. Stability matters in enterprise technology, where customers make multi-year platform commitments based partly on confidence that the vendor's strategic direction will remain consistent.

Under McMillan, Teradata has adopted what might be called a "profitable cloud growth" strategy — a deliberate contrast with the growth-at-all-costs approach of competitors like Snowflake and Databricks. The positioning has evolved from "data warehouse in the cloud" to "Connected Multi-Cloud Data Platform," and most recently to "Autonomous AI and Knowledge Platform." Each evolution reflects an attempt to escape the shrinking box of traditional data warehousing and position Teradata for adjacent growth opportunities.

The competitive landscape has continued to intensify, and understanding the specific nature of that competition is essential for anyone evaluating Teradata's prospects. Snowflake, which went public in September 2020 and saw its market value briefly exceed $120 billion in the months that followed, has settled into a position of approximately $5 billion in annual recurring revenue. Databricks, still private, has reached a comparable ARR level with a reported valuation exceeding $60 billion. Both companies are growing significantly faster than Teradata and have captured the mindshare of the developer and data engineering communities, the people who increasingly influence which platforms enterprises adopt. Amazon Redshift, Google BigQuery, and Microsoft Azure Synapse benefit from being bundled into their respective hyperscaler ecosystems, making them default choices for cloud-native workloads.

The competitive dynamic is not just about product features — it is about ecosystem gravity. Snowflake has built a data marketplace where companies can share and monetize data assets. Databricks has assembled a formidable open-source ecosystem around Apache Spark, Delta Lake, and MLflow. The hyperscalers have the ultimate advantage of being both the infrastructure layer and the analytics layer, allowing them to offer tightly integrated solutions at marginal cost. Teradata, by contrast, sits on top of the hyperscalers' infrastructure as a third-party application — a position that adds cost without adding the ecosystem benefits that competitors provide.

Teradata's differentiation argument centers on three claims. First, that for the most complex, large-scale analytical workloads — thousands of concurrent queries of varying complexity — Teradata's workload management capabilities remain superior. Second, that genuine multi-cloud portability matters to large enterprises seeking to avoid hyperscaler lock-in. Third, that the company's deep integration with enterprise governance, security, and compliance frameworks makes it the trusted choice for regulated industries.

The financial trajectory tells a story of a company in transition — stable enough to survive, but not yet growing enough to thrive. FY2025 total revenue was $1.663 billion, down five percent from the prior year. But Cloud ARR reached $701 million, up fifteen percent, and now represents forty-six percent of total ARR. Total ARR was $1.522 billion, up three percent. Free cash flow was $285 million, above the high end of guidance. The company repurchased approximately $140 million of its own stock during the year, ending with $493 million in cash.

The Q4 2025 earnings report, released on February 10, 2026, was a genuine inflection point. Revenue of $421 million beat estimates by five percent. Non-GAAP earnings per share of $0.74 crushed consensus estimates of $0.47 by fifty-seven percent. Non-GAAP operating margins expanded to 22.8 percent, up from 17.6 percent a year earlier. The results triggered a stock surge of more than forty percent in a single session.

Two additional catalysts arrived around the same time. Alongside the earnings report, Teradata announced a cooperation agreement with activist investor Lynrock Lake, which holds a 9.9 percent stake. The agreement expands the board from nine to ten directors, with Lynrock Lake gaining input into board composition. Teradata had also settled its years-long litigation against SAP for $480 million in cash, netting the company approximately $355 to $362 million after legal costs, before taxes.

On the product front, Teradata has invested aggressively in AI capabilities. The company launched an Enterprise Vector Store, an AI Factory for on-premises deployment, enhanced ClearScape Analytics with ModelOps capabilities for agentic and generative AI, and introduced AgentBuilder for prebuilt enterprise AI agents. McMillan has framed AI as the key to reopening Teradata's total addressable market, telling analysts on the Q4 call: "The whole AI marketplace for us is opening up a new TAM." The company reported more than 150 enterprise AI engagements in 2025, and POC activity doubled year-over-year.

The SAP litigation settlement deserves separate attention because it is material both financially and strategically. Teradata had sued SAP in 2018, alleging that SAP misappropriated Teradata's trade secrets through a joint venture and violated antitrust laws by conditioning software sales on the use of SAP's own database products. After years of litigation that reached the U.S. Supreme Court on the antitrust claims, the parties settled in February 2026 for $480 million in cash. After contingent fees and legal costs, Teradata expects to net approximately $355 to $362 million before taxes. This is a significant windfall for a company with a market capitalization of roughly $2.8 billion — effectively adding more than twelve percent of market cap in cash. How management deploys this capital — buybacks, debt reduction, R&D investment, or acquisitions — will be a revealing strategic signal.
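The settlement arithmetic can be checked with a few lines of Python. This is a minimal sketch that uses only the figures quoted above; the $2.8 billion market capitalization is the rough estimate from the text, and the variable names are illustrative.

```python
# Figures quoted in the text, in millions of dollars.
GROSS_SETTLEMENT_M = 480.0
NET_LOW_M, NET_HIGH_M = 355.0, 362.0
MARKET_CAP_M = 2800.0  # rough estimate from the text, not a live quote


def share_of_market_cap(amount_m: float, market_cap_m: float) -> float:
    """Return an amount as a percentage of market capitalization."""
    return 100.0 * amount_m / market_cap_m


net_low_pct = share_of_market_cap(NET_LOW_M, MARKET_CAP_M)
net_high_pct = share_of_market_cap(NET_HIGH_M, MARKET_CAP_M)

# Contingent fees and legal costs implied by the gap between gross and net.
implied_costs_range = (GROSS_SETTLEMENT_M - NET_HIGH_M,
                       GROSS_SETTLEMENT_M - NET_LOW_M)

print(f"Net proceeds: {net_low_pct:.1f}% to {net_high_pct:.1f}% of market cap")
print(f"Implied fees and costs: ${implied_costs_range[0]:.0f}M "
      f"to ${implied_costs_range[1]:.0f}M")
```

On these inputs the net proceeds land a little under thirteen percent of market capitalization, before taxes.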

The securities fraud class action filed in June 2024 represents a separate legal overhang. The suit covers the period from February 2023 through February 2024 and alleges that Teradata and senior executives made materially misleading statements about the company's pipeline and growth prospects. The catalyst was the February 13, 2024 earnings miss that sent the stock down twenty-two percent in a single day. This litigation appears to be ongoing and represents a potential financial and reputational risk that investors should monitor.

FY2026 guidance calls for total ARR growth of two to four percent, total revenue ranging from a two percent decline to flat, and free cash flow of $310 to $330 million. The non-GAAP EPS guidance of $2.55 to $2.65 implies continued margin expansion. It is a guidance profile that says: we are not yet growing the top line, but we are managing the business for profitability and cash generation while the cloud transition matures.

The unanswered question is whether this is enough. Teradata's cloud net expansion rate — a measure of how much existing cloud customers expand their spending — has declined from approximately 123 percent in early 2024 to 108 percent by Q4 2025. That is still above 100 percent, meaning customers are growing their Teradata cloud spending, but the deceleration is concerning. If net expansion rate falls below 100 percent, it would signal that even existing cloud customers are contracting their Teradata usage — a fundamentally different and more troubling dynamic.
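For readers less familiar with the metric, here is a minimal sketch of how a net expansion rate is commonly computed. It assumes the generic cohort definition (current ARR from customers who were already customers a year ago, divided by their ARR a year ago); Teradata's exact methodology may differ, and the customer names and dollar amounts are hypothetical.

```python
def net_expansion_rate(arr_prior: dict[str, float],
                       arr_now: dict[str, float]) -> float:
    """Percent of last year's ARR retained and expanded by the same customers.

    Customers who churned count as zero today; customers who are new
    today are excluded, because they were not in last year's cohort.
    """
    prior_total = sum(arr_prior.values())
    now_total = sum(arr_now.get(customer, 0.0) for customer in arr_prior)
    return 100.0 * now_total / prior_total


# Hypothetical cloud ARR by customer, in millions of dollars.
a_year_ago = {"bank_a": 10.0, "telco_b": 5.0, "retailer_c": 5.0}
today = {"bank_a": 12.0, "telco_b": 5.6, "retailer_c": 4.0, "new_d": 3.0}

expanding = net_expansion_rate(a_year_ago, today)

# Same cohort, but the retailer leaves entirely.
today_with_churn = {"bank_a": 12.0, "telco_b": 5.6}
contracting = net_expansion_rate(a_year_ago, today_with_churn)

print(f"{expanding:.0f}% vs {contracting:.0f}%")
```

In the first case the cohort grows even though one customer shrinks. In the second, a single departure pulls the rate below 100 percent, which is the scenario the paragraph above warns about.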

IX. The Technology Deep Dive: Why Teradata (Still) Matters

To understand why some of the world's largest companies still rely on Teradata — despite a decade of cloud-native alternatives — requires understanding what Teradata actually does differently under the hood. The technical advantages are real, even if they matter for a narrowing set of use cases.

Start with the query optimizer. When you send a question to a database — say, "What were total sales by region, product category, and customer segment for the past five years?" — the database needs to figure out the most efficient way to retrieve that answer. This is like a GPS navigation system: there are thousands of possible routes, and the optimizer needs to find the best one, fast. Teradata's Adaptive Optimizer is distinctive because it does not plan the entire route at once. Instead, it builds the plan incrementally, executing each segment and using the actual results from that segment to improve planning for the next. Think of it as a GPS that recalculates after every turn based on what it learned about traffic conditions, rather than committing to a fixed route before you leave the driveway.
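The GPS analogy can be made concrete with a toy model. The sketch below is not Teradata's algorithm; it only illustrates the general idea of planning incrementally from observed results rather than from up-front estimates. The `Fragment` type, the row counts, the `BROADCAST_LIMIT` threshold, and the two join strategy names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cutoff: small intermediate results are copied to every node,
# large ones are redistributed across nodes by join key.
BROADCAST_LIMIT = 10_000


@dataclass
class Fragment:
    name: str
    estimated_rows: int  # what (possibly stale) statistics predict
    actual_rows: int     # what running the fragment really returns


def choose_join(rows: int) -> str:
    return "broadcast" if rows <= BROADCAST_LIMIT else "redistribute"


def static_plan(fragments):
    """Fix every join strategy up front, from estimates alone."""
    return [(f.name, choose_join(f.estimated_rows)) for f in fragments]


def execute(fragment: Fragment) -> int:
    """Stand-in for running a fragment; returns its observed row count."""
    return fragment.actual_rows


def incremental_plan(fragments):
    """Run one fragment at a time and plan its join from the observed size."""
    plan = []
    for f in fragments:
        observed = execute(f)
        plan.append((f.name, choose_join(observed)))
    return plan


fragments = [
    Fragment("sales_last_5_years", estimated_rows=5_000, actual_rows=2_000_000),
    Fragment("premium_customers", estimated_rows=500_000, actual_rows=800),
]
print(static_plan(fragments))
print(incremental_plan(fragments))
```

With these made-up numbers the static planner picks the wrong strategy for both fragments because its estimates are badly off, while the incremental planner corrects course after each step.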

Then there is workload management. Teradata Active System Management, known as TASM, provides extraordinarily granular control over how computing resources are allocated across different types of work. In practical terms, this means a bank can run a high-priority fraud detection query (needs to finish in seconds), a medium-priority regulatory reporting batch job (needs to finish within hours), and a low-priority ad hoc exploration query from a data analyst (can take as long as it takes), all on the same system, simultaneously, with guaranteed service levels for each. The system classifies workloads, sets priorities, applies throttles to prevent any single query from consuming excessive resources, and manages queues automatically.
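The classify, prioritize, throttle, and queue loop described above can be sketched in a few dozen lines. This is an illustrative toy, not TASM's actual design or API: the workload class names, priorities, throttle limits, and the `WorkloadManager` class are all assumptions made for the example.

```python
import heapq
from itertools import count

# Hypothetical workload classes: lower number means higher priority.
PRIORITY = {"tactical": 0, "batch": 1, "adhoc": 2}
# Hypothetical throttles: maximum concurrent queries per class.
THROTTLE = {"tactical": 10, "batch": 2, "adhoc": 1}


class WorkloadManager:
    def __init__(self, slots: int):
        self.slots = slots                      # total concurrent queries allowed
        self.queue = []                         # heap of waiting queries
        self.running = {name: 0 for name in PRIORITY}
        self._seq = count()                     # tie-breaker keeps FIFO order

    def submit(self, query_id: str, workload: str) -> None:
        entry = (PRIORITY[workload], next(self._seq), query_id, workload)
        heapq.heappush(self.queue, entry)

    def dispatch(self) -> list[str]:
        """Start queued queries in priority order, honoring throttles."""
        started, deferred = [], []
        while self.queue and sum(self.running.values()) < self.slots:
            entry = heapq.heappop(self.queue)
            _, _, query_id, workload = entry
            if self.running[workload] < THROTTLE[workload]:
                self.running[workload] += 1
                started.append(query_id)
            else:
                deferred.append(entry)          # class is at its limit; keep waiting
        for entry in deferred:
            heapq.heappush(self.queue, entry)
        return started


wm = WorkloadManager(slots=4)
wm.submit("explore_1", "adhoc")
wm.submit("explore_2", "adhoc")
wm.submit("reg_report", "batch")
wm.submit("fraud_check", "tactical")
started = wm.dispatch()
print(started, "still queued:", len(wm.queue))
```

Even though the fraud check was submitted last, it starts first, and the second ad hoc query waits in the queue because its class has hit its throttle.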

This matters because in the real world, enterprise analytics systems do not just run one type of query. They run thousands of concurrent queries of wildly different complexity, priority, and resource requirements. This is the scenario where Teradata consistently outperforms cloud-native alternatives. According to Teradata's own benchmarks — which should be taken with appropriate skepticism — VantageCloud Lake handled sixty-two times more queries than Snowflake in the same time frame, and eight times more than Databricks at twelve times lower cost per query. Independent benchmarks would likely show narrower advantages, but the directional point holds: for complex, mixed-workload environments at scale, Teradata has genuine technical differentiation.

The architectural evolution from proprietary hardware to cloud-native has been remarkable. The original Teradata systems used purpose-built hardware with a custom BYNET networking fabric. VantageCloud Enterprise preserved the traditional architecture but replaced physical nodes with cloud virtual machines — same engine, different infrastructure. VantageCloud Lake went further, moving to an object storage foundation (S3, Azure Blob, Google Cloud Storage) with elastic compute clusters and native support for open table formats like Apache Iceberg and Delta Lake.

ClearScape Analytics, Teradata's integrated analytics engine, provides over 150 built-in analytical functions that execute directly within the database. This is significant because it eliminates what data engineers call the "data movement penalty" — the time and cost of extracting data from a warehouse, moving it to an external machine learning platform, running models, and moving results back. For organizations with petabyte-scale data, this can mean the difference between an analysis that takes minutes and one that takes hours.

In 2025, ClearScape was enhanced with ModelOps capabilities for agentic and generative AI, including native support for open-source ONNX embedding models and integration with cloud LLM APIs from Azure OpenAI, Amazon Bedrock, and Google Gemini. The Enterprise Vector Store — launched the same year — provides a foundation for retrieval-augmented generation, enabling enterprise AI agents to query vast knowledge bases with Teradata's characteristic performance and governance.

The multi-cloud portability story deserves careful scrutiny because it is central to Teradata's competitive pitch. The argument goes like this: large enterprises increasingly operate across multiple cloud providers. A global bank might use AWS in the United States, Azure in Europe (for Microsoft integration with existing Office 365 deployments), and Google Cloud in Asia Pacific (for AI and machine learning capabilities). If their analytics platform can only run on one cloud, they either accept lock-in or maintain separate analytical environments — an operational nightmare. Teradata's ability to run the same workloads, with the same data models and the same performance characteristics, across all three hyperscalers is genuinely differentiated. Snowflake also supports multi-cloud, but Teradata's pitch emphasizes workload portability — the ability to move a running analytical workload from one cloud to another without rewriting queries or reconfiguring data pipelines.

How much this matters in practice is debatable. Most enterprises have not yet reached the level of multi-cloud sophistication where workload portability is a daily concern. But as data sovereignty regulations tighten — the EU's evolving data governance requirements, for instance — the ability to run identical analytics in different jurisdictions on different clouds may become increasingly valuable.

The services moat deserves mention as well. Teradata's professional services organization — representing roughly sixteen percent of revenue — deploys consultants who often spend years embedded within client organizations. These consultants accumulate deep knowledge of each customer's data models, business processes, and analytical workflows. While competitors partner with large systems integrators like Accenture and Deloitte, Teradata's in-house expertise for its own platform is difficult to replicate. The irony, of course, is that some of these same systems integrators now offer Teradata-to-Snowflake migration services.

X. Business Model & Unit Economics

The most important thing to understand about Teradata's current financial profile is that you are looking at a company in the middle of a business model transition. The overall revenue number — $1.663 billion in FY2025, declining — is misleading if taken at face value. What matters is the composition of that revenue and where each piece is heading.

Total Annual Recurring Revenue stood at $1.522 billion at the end of FY2025, up three percent. This is the revenue base that management considers sustainable — subscriptions, cloud consumption, and maintenance contracts. Within that, Cloud ARR of $701 million is the growth engine, up fifteen percent and now accounting for forty-six percent of total ARR. The remainder is on-premises subscription and legacy maintenance revenue, which is declining as customers either migrate to the cloud or, in some cases, migrate away from Teradata entirely.
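A quick back-of-the-envelope check shows what these figures imply for the non-cloud remainder. The sketch below backs out prior-year values from the rounded growth rates quoted above, so the result is an approximation rather than a reported number; the variable names are illustrative.

```python
# Figures quoted in the text, in millions of dollars.
TOTAL_ARR = 1522.0
CLOUD_ARR = 701.0
TOTAL_GROWTH = 0.03   # "up three percent" (rounded in the text)
CLOUD_GROWTH = 0.15   # "up fifteen percent" (rounded in the text)

cloud_share_pct = 100.0 * CLOUD_ARR / TOTAL_ARR
non_cloud_now = TOTAL_ARR - CLOUD_ARR

# Back out approximate prior-year values from the rounded growth rates.
total_prior = TOTAL_ARR / (1 + TOTAL_GROWTH)
cloud_prior = CLOUD_ARR / (1 + CLOUD_GROWTH)
non_cloud_prior = total_prior - cloud_prior

non_cloud_change_pct = 100.0 * (non_cloud_now / non_cloud_prior - 1)

print(f"Cloud share of total ARR: {cloud_share_pct:.0f}%")
print(f"Non-cloud ARR: ${non_cloud_now:.0f}M, "
      f"roughly {non_cloud_change_pct:.1f}% year over year")
```

On these rounded inputs, the on-premises and maintenance portion of ARR is shrinking by roughly five percent a year, which is why fifteen percent cloud growth translates into only three percent growth overall.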

The revenue that is disappearing most rapidly is perpetual license revenue and upfront hardware sales — the high-margin transactions that powered the golden age. In a perpetual license model, a customer paid millions upfront, and the vendor recognized revenue immediately. In a subscription model, that same revenue gets recognized ratably over the contract term. The transition from perpetual to subscription inherently depresses near-term revenue even when the underlying customer relationship is healthy. This is the J-curve effect, and Teradata has been living through it for nearly a decade.
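The J-curve falls directly out of the recognition rules. Here is a minimal sketch assuming a hypothetical vendor that signs one $3 million deal every year and switches from perpetual licenses to three-year subscriptions in year two; the deal sizes, terms, and timing are invented for illustration, and real revenue recognition is more nuanced than this.

```python
def recognized_revenue(deals, years):
    """Return recognized revenue per year for a list of deals.

    Each deal is (start_year, total_value, term_years, model), where model is
    "perpetual" (all revenue recognized at signing) or "subscription"
    (revenue recognized evenly over the term).
    """
    revenue = [0.0] * years
    for start, value, term, model in deals:
        if model == "perpetual":
            revenue[start] += value
        else:
            per_year = value / term
            for year in range(start, min(start + term, years)):
                revenue[year] += per_year
    return revenue


# Hypothetical: the same $3M of new business is signed every year.
deals = [
    (0, 3.0, 3, "perpetual"),
    (1, 3.0, 3, "perpetual"),
    (2, 3.0, 3, "subscription"),
    (3, 3.0, 3, "subscription"),
    (4, 3.0, 3, "subscription"),
    (5, 3.0, 3, "subscription"),
]
by_year = recognized_revenue(deals, years=6)
print(by_year)
```

Bookings never change in this toy example, yet recognized revenue drops by two thirds in the first subscription year and takes until year four to recover, once three overlapping subscriptions are being recognized at the same time.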

Gross margins tell an interesting story. Hardware sales carried high gross margins but are now a minimal part of the business. Cloud delivery carries different economics — Teradata pays hyperscalers for infrastructure and earns a margin on top — but at scale, cloud gross margins have been improving as the company gains leverage on its cloud operations. Non-GAAP operating margins expanded to 22.8 percent in Q4 2025, a meaningful improvement from 17.6 percent a year earlier, suggesting that the cost restructuring is working.

Customer concentration is manageable, though the base skews heavily toward large enterprises. No single customer represents ten percent or more of total revenue, but the customer list is weighted toward Fortune 500 companies. Teradata reported approximately one thousand large enterprise customers, including fifteen of the top twenty global banks. The average contract value for cloud deals tends to be significant. Management has noted that some customers are moving from single eight-figure transformational deals to multiple seven-figure phased deals, a behavioral shift that may slow ARR growth but reduce deployment risk.

The partner ecosystem is evolving. Cloud marketplace transactions through AWS, Azure, and Google Cloud are growing, giving customers the ability to purchase Teradata through existing cloud spending commitments. Systems integrators remain important for implementation, migration, and optimization. NVIDIA was named 2025 Partner of the Year, reflecting Teradata's investment in AI capabilities, while Microsoft and Dell also received partner recognition.

The sales cycle in the cloud era looks fundamentally different from the appliance era. In the old world, a single Teradata deal might involve eighteen months of engagement, a proof-of-concept deployment, executive-level negotiations, and a multi-million-dollar purchase order. In the cloud world, the initial landing can be smaller — a customer might start with a VantageCloud deployment for a single use case or department, prove value in weeks rather than months, and then expand. This land-and-expand motion is more efficient in some ways but also reduces the size of initial deals and requires a different kind of sales capability — relationship management and customer success rather than transactional selling.

The accounting treatment of cloud transitions also creates complexity for investors trying to evaluate Teradata's financial health. When an on-premises customer migrates to VantageCloud, the perpetual license and hardware revenue they previously generated disappears from the income statement. The new cloud subscription revenue replaces it — but often at a lower initial annual value because the customer is paying ratably rather than upfront, and because cloud pricing tends to be more competitive than on-premises pricing was. Over a three-to-five-year contract, the total value may be comparable, but the near-term revenue impact is negative. This dynamic will continue to compress reported revenue for as long as the migration of on-premises customers to cloud continues.

Free cash flow of $285 million in FY2025 — with guidance for $310 to $330 million in FY2026 — is perhaps the most reassuring metric for investors. It demonstrates that even while revenue declines, the business generates substantial cash. The company used approximately $140 million for share repurchases in FY2025 and announced a new $500 million buyback authorization in 2026, signaling confidence in the durability of cash generation.

XI. Porter's 5 Forces & Hamilton's 7 Powers Analysis

Competitive Rivalry: Intense and Asymmetric

The cloud data analytics market is one of the most intensely competitive arenas in enterprise technology. Snowflake and Databricks, each at approximately $5 billion in ARR, dominate the narrative and the growth trajectory. AWS Redshift, Google BigQuery, and Microsoft Azure Synapse benefit from hyperscaler bundling — customers using AWS for infrastructure often default to Redshift simply because it is there, pre-integrated, and covered under existing spending commitments. Teradata's $1.5 billion total ARR makes it a mid-sized player defending a specific niche: the most complex, large-scale, mixed-workload analytical environments at the world's largest enterprises. The rivalry is asymmetric because Teradata is not competing for the same net-new cloud-native customers as Snowflake — it is fighting to retain and expand its existing installed base while competitors attempt to dislodge those same customers.

Threat of New Entrants: Elevated

Cloud infrastructure has dramatically lowered the barriers to building analytical databases. Open-source foundations like Apache Iceberg, Delta Lake, and Trino enable new entrants to assemble competitive capabilities without starting from scratch. Emerging players like ClickHouse, Firebolt, and StarRocks demonstrate ongoing new entry. However, achieving enterprise-grade reliability, earning security certifications (SOC 2, HIPAA, FedRAMP), and building a sales organization capable of penetrating Fortune 500 IT departments remain substantial barriers that protect incumbents from the most casual new entrants.

Bargaining Power of Suppliers: Moderate and Conflicted

Teradata's most important suppliers are also its most important competitors: the hyperscale cloud providers. AWS, Azure, and Google Cloud provide the infrastructure on which VantageCloud runs. Each of them also operates a competing data warehousing service. This creates a "coopetition" dynamic where Teradata is simultaneously a customer, partner, and competitor. Teradata's multi-cloud support — the ability to run on all three hyperscalers — provides some negotiating leverage, as no single cloud provider can hold Teradata hostage. But the fundamental tension is real and enduring.

Bargaining Power of Buyers: High and Increasing

Enterprise buyers have more leverage than at any point in Teradata's history. The number of viable alternatives for analytical workloads has proliferated dramatically. Open table formats (Iceberg, Delta Lake) reduce data lock-in by allowing data to be queried by multiple engines — a customer's data stored in Iceberg format can be read by Teradata, Snowflake, Databricks, or Trino interchangeably, eliminating the proprietary data format lock-in that once made migration so painful. Migration tools from Databricks (Lakebridge) and Snowflake (SnowConvert) lower the cost and complexity of converting Teradata-specific SQL and scripts to target platforms. Procurement teams increasingly demand multi-vendor strategies and competitive bake-offs that pit Teradata directly against cloud-native alternatives on cost and performance. Every Teradata renewal is, to some degree, a retention battle — and the customer knows it.

Threat of Substitutes: Very High

The data lakehouse paradigm — pioneered by Databricks and now embraced by virtually every vendor — represents the most significant substitute threat. To understand why, consider what a lakehouse does. A traditional data warehouse stores structured data in a proprietary format optimized for fast queries. A data lake stores raw data — structured, semi-structured, and unstructured — in cheap object storage like Amazon S3. The lakehouse combines both: it stores data cheaply in object storage (like a lake) but adds a reliability and performance layer (like a warehouse) that enables SQL queries, ACID transactions, and machine learning workflows on the same data. The result is a single platform that can serve both data engineers building pipelines and business analysts running reports, without the cost and complexity of maintaining separate warehouse and lake systems.

This is a direct challenge to Teradata's core value proposition. If a lakehouse can handle the same analytical workloads at lower cost with greater flexibility, the rationale for a dedicated data warehouse weakens. The lakehouse market is projected to grow from roughly $11 billion in 2024 to over $42 billion by 2031. Teradata has responded by embracing open table formats like Apache Iceberg and Delta Lake and positioning VantageCloud Lake as a lakehouse-compatible platform, but it is adapting to a paradigm invented by its competitors rather than defining it.

Additionally, the rise of composable data architectures — where organizations assemble best-of-breed tools for each function (ingestion, storage, transformation, analytics, machine learning) rather than buying a single integrated platform — further fragments the market. Tools like dbt for transformation, Fivetran for ingestion, and open-source query engines like Trino allow enterprises to build analytical stacks without any traditional data warehouse vendor. This "unbundling" trend represents yet another vector of substitution pressure on Teradata.

Hamilton's 7 Powers Applied to Teradata

The most useful framework for understanding Teradata's competitive durability comes from Hamilton Helmer's "7 Powers." Consider each:

Switching Costs remain Teradata's most significant power. Migration away from Teradata typically costs between $20 million and $30 million for a mid-range system, involving SQL dialect translation, rewriting of proprietary BTEQ scripts and FastLoad utilities, rebuilding of years of performance tuning, and revalidation of mission-critical analytical pipelines. Systems integrators have been known to charge up to ten times a customer's annual Teradata spend for a full migration engagement. However, this power is eroding as automated translation tools improve and as LLMs are applied to code migration tasks.

Process Power — the embedded organizational capability to deliver complex enterprise analytics implementations — is genuine. Teradata's four decades of experience deploying, tuning, and optimizing large-scale analytical systems at the world's most demanding organizations is difficult to replicate quickly. But process power requires a growing market to be durable, and if the market for traditional data warehousing shrinks, expertise in a declining domain loses value.

Branding is a double-edged sword. Among enterprise data professionals in financial services, telecom, and retail, Teradata conveys reliability and scale. But among cloud-native developers and data engineers — the decision-influencers of the future — the brand connotes legacy, expensive, and proprietary. Gartner's classification of Teradata as a "Visionary" rather than a "Leader" in its 2025 Magic Quadrant for Cloud Database Management Systems captures this perception gap. Notably, Forrester took a more favorable view, naming Teradata a Leader in its 2025 Wave for Data Management for Analytics Platforms with the highest score possible in the Vision criterion.

Scale Economies, Network Effects, and Counter-Positioning are largely absent. Teradata is smaller than its primary competitors, does not benefit from platform network effects, and has moved from counter-positioning (the trusted enterprise platform versus immature cloud upstarts) to competing on the same cloud turf.

Cornered Resource is modest. Teradata possesses significant intellectual property in query optimization, workload management, and parallel processing. However, this IP is not truly cornered — competitors have invested billions in building comparable capabilities, and the shift to open-source foundations (Apache Iceberg, Delta Lake) commoditizes what was once proprietary. The closest thing to a genuine cornered resource is Teradata's four decades of enterprise data model templates and industry-specific accelerators — pre-built analytical frameworks for banking, telecom, retail, and healthcare that encode hard-won domain knowledge. These are difficult to replicate quickly but are not sufficient on their own to sustain competitive advantage.

The verdict is sobering but not terminal. Teradata's moat has narrowed dramatically from the impregnable fortress of the golden age to a defensible but fragile position built primarily on switching costs and process expertise within a specific niche of the market. The strategic question is whether that niche is large enough and durable enough to sustain a multi-billion-dollar business.

Myth vs. Reality: Checking the Consensus Narratives

Several consensus narratives about Teradata deserve fact-checking.

The first myth is that "Teradata is a legacy company that no one uses anymore." The reality is that roughly a thousand large enterprises still run mission-critical analytics on Teradata, and the company was named a Leader in Forrester's 2025 Wave for Data Management for Analytics Platforms. Teradata also scored above 4.0 across all three analytical use cases in the Gartner evaluation. The technology is genuinely respected by the analysts who evaluate it most rigorously.

The second myth is that "cloud migration from Teradata is easy." The reality is that a typical mid-range migration still costs between twenty and thirty million dollars and takes years. Automated translation tools help, but the complexity of converting Teradata-specific SQL dialects, BTEQ scripts, and workload management configurations remains substantial.

The third myth is that "Teradata's cloud product is just a lift-and-shift of the old platform." The reality is that VantageCloud Lake, which moved to an object storage-native architecture with elastic compute, is a genuinely cloud-native redesign, not merely a virtual machine running the old appliance software. Whether it is competitive enough to win new customers is debatable, but dismissing it as legacy technology in a cloud wrapper does not match the technical reality.

XII. Bull vs. Bear Case

The Bull Case

The optimist's view of Teradata starts with the math of the installed base. Roughly a thousand large enterprises — including fifteen of the top twenty global banks — still run mission-critical analytics on Teradata. The switching cost analysis is straightforward: a $20 to $30 million migration cost, plus years of risk, plus institutional knowledge loss, versus renewing with Teradata and migrating to VantageCloud. For risk-averse enterprises in regulated industries, the calculation often favors staying. Cloud ARR growth of fifteen percent, while decelerating, validates that customers are choosing to move their Teradata workloads to the cloud rather than ripping them out.

Multi-cloud portability is a genuine differentiator. As enterprises increasingly distribute workloads across multiple cloud providers — driven by data sovereignty regulations, cost optimization, and vendor diversification strategies — Teradata's ability to run natively on all three hyperscalers with workload portability becomes more valuable, not less. Snowflake also offers multi-cloud support, but Teradata's pitch to the CIO is: we have been managing your most complex analytics for twenty years, and now we can do it on any cloud you choose.

The AI angle deserves serious consideration. Teradata's argument is that AI workloads, particularly agentic AI systems that need to reason across vast enterprise datasets, require exactly the kind of governed, high-performance, multi-source data platform that Teradata has been building for decades. The Enterprise Vector Store, ClearScape Analytics ModelOps, and on-premises AI capabilities (next-generation hardware with built-in GPUs) position Teradata to serve enterprises that cannot or will not send sensitive data to public cloud AI services. Under McMillan, proof-of-concept (POC) activity doubled in 2025, suggesting this resonates with customers. If even a fraction of the more than 150 AI engagements reported in 2025 convert to expanded production deployments, it could meaningfully accelerate cloud ARR growth.

The financial profile is underappreciated. Free cash flow of $285 million on a roughly $2.8 billion market cap represents a roughly ten percent free cash flow yield, with guidance for improvement. The $480 million SAP settlement adds a one-time cash infusion. The $500 million buyback authorization provides downside support. At current valuations, the stock prices in continued decline rather than stabilization. Any re-acceleration of growth could drive a significant re-rating.

The Bear Case

The pessimist's view begins with the revenue trajectory: $2.73 billion in 2014, $1.66 billion in 2025, with guidance for flat to down in 2026. That is a decline of roughly forty percent over eleven years with no clear inflection toward growth. Cloud ARR is growing, but total revenue keeps falling because on-premises declines outpace cloud gains. The net expansion rate for cloud customers has fallen from 123 percent to 108 percent over the past year. That still indicates net expansion, but the trend is unfavorable. If cloud expansion does not accelerate, total ARR growth will eventually turn negative.
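To put the revenue trajectory in per-year terms, here is a minimal Python sketch that computes the total and annualized change from the two revenue figures quoted above. The function names are illustrative, and the annualized figure assumes a smooth compound decline, which is a simplification of the actual year-by-year path.

```python
def total_change(start, end):
    """Fractional change from start to end (negative means decline)."""
    return end / start - 1


def annualized_change(start, end, years):
    """Constant yearly rate that would compound from start to end."""
    return (end / start) ** (1 / years) - 1


# Revenue figures in $ billions, as quoted in the text.
revenue_2014 = 2.73
revenue_2025 = 1.66
years = 2025 - 2014  # eleven years

decline = total_change(revenue_2014, revenue_2025)
per_year = annualized_change(revenue_2014, revenue_2025, years)

print(f"total change: {decline:.1%}")
print(f"annualized change: {per_year:.1%}")
```

Under that smoothing assumption, the drop works out to about 39 percent in total, or a bit over 4 percent a year compounded.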

The competitive narrative has been lost. Snowflake and Databricks dominate the conversation among data professionals, at industry conferences, and in the technology press. Teradata is rarely mentioned in the same breath. In enterprise technology, narrative matters because it shapes where talent goes, where startups build integrations, and which platforms young engineers learn in school. Teradata's brand perception among the next generation of data professionals is poor, and this has compounding long-term consequences for new logo acquisition.

The total addressable market for Teradata's core use case — traditional enterprise data warehousing — is shrinking as the lakehouse paradigm, open-source tools, and composable data architectures chip away at the workloads that once required a dedicated data warehouse. Teradata is fishing in a pond that is getting smaller, even as it gets better at fishing. The AI expansion thesis is unproven: the enterprise AI market is highly competitive, with hyperscalers, Databricks, and purpose-built AI platforms all vying for the same budgets.

Management execution risk is real. Three CEOs in four years between 2016 and 2020 created organizational whiplash. McMillan has provided stability, but the ongoing 2024-2026 restructuring — including a 9 to 10 percent workforce reduction and a voluntary separation program — suggests the company is still right-sizing for a smaller business, not preparing for growth. The securities fraud class action filed in June 2024, covering the period from February 2023 through February 2024 and alleging materially misleading statements about pipeline and growth prospects, represents a legal overhang.

Perhaps most fundamentally, the bear case asks whether Teradata can ever grow again. A company generating strong free cash flow from a slowly declining revenue base is not worthless — but it is a very different investment thesis from a turnaround or growth story. The risk is that the stock is a "value trap" — cheap on current cash flows, but appropriately cheap because those cash flows will decline over time as customers eventually complete their migrations away from the platform.

The KPIs That Matter

For investors tracking Teradata's evolution, two metrics stand above all others.

First, Public Cloud ARR growth rate. This is the single most important indicator of whether the cloud transformation is succeeding. The current fifteen percent growth rate is the baseline to watch: acceleration would validate the strategy, while sustained deceleration would confirm the bear case.

Second, Cloud net expansion rate. This measures whether existing cloud customers are growing their Teradata spend over time. At 108 percent, it indicates net expansion, but the declining trend from 123 percent needs to stabilize or reverse. If this metric falls below 100 percent, it signals that even cloud-migrated customers are shrinking their Teradata usage, which would fundamentally undermine the bull thesis.
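For readers unfamiliar with the metric, the sketch below shows one common way a net expansion rate is calculated: take the customers who were paying a year ago and compare what that same group pays today. This is an assumption about the general industry definition, not Teradata's exact disclosed methodology, and the customer names and ARR values are hypothetical.

```python
def net_expansion_rate(arr_year_ago, arr_today):
    """ARR today from last year's customers divided by their ARR a year ago.

    Customers who churned count as zero today. Customers who are new
    since last year are excluded from the calculation entirely.
    """
    start = sum(arr_year_ago.values())
    end = sum(arr_today.get(customer, 0) for customer in arr_year_ago)
    return end / start


# Hypothetical cloud ARR by customer, in $ thousands.
arr_year_ago = {"bank_a": 1000, "telco_b": 500, "retailer_c": 500}
arr_today = {
    "bank_a": 1200,      # expanded usage
    "telco_b": 420,      # reduced usage
    "retailer_c": 540,   # modest expansion
    "airline_d": 300,    # new customer, ignored by the metric
}

rate = net_expansion_rate(arr_year_ago, arr_today)
print(f"net expansion rate: {rate:.0%}")
```

In this made-up cohort the rate comes out at 108 percent: one customer shrank, but the group as a whole spends more than it did a year earlier. A reading below 100 percent would mean the existing base is shrinking even before counting any new customers.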

XIII. Playbook: Lessons for Founders & Investors

Teradata's four-decade journey offers a textbook's worth of strategic lessons, many of them painful.

The Innovator's Dilemma, Lived in Real Time. Clayton Christensen could have written another chapter about Teradata. The company invented enterprise data warehousing, dominated it for two decades, and then watched as cloud-native upstarts built simpler, cheaper, good-enough alternatives that started at the low end of the market and moved relentlessly upward. The classic pattern played out with almost textbook precision: Teradata's best customers told it they needed on-premises performance, Teradata listened to those customers, and by the time those same customers changed their minds about cloud, competitors had a multi-year head start. Protecting the golden goose of on-premises revenue delayed the cloud pivot by years — arguably the most expensive strategic mistake in the company's history.

Business Model Transitions Are More Painful Than Anyone Admits. The shift from perpetual licenses to subscription pricing is something every enterprise software company has navigated or is navigating. Teradata's experience reveals how brutal the J-curve can be when you start late. Revenue declined for seven of the eight years between 2014 and 2022 — not because the company was losing customers (though some left), but because the economic shape of each deal changed. A perpetual license deal might generate $5 million in upfront revenue; the equivalent subscription deal generates $5 million over three to five years. Multiply that across hundreds of customers transitioning simultaneously, and you get a multi-year revenue compression that tests the patience of investors, employees, and the board.
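The revenue compression described above can be illustrated with a deliberately simplified Python sketch. It assumes one identical $5 million deal is signed every year, that subscription revenue is recognized evenly over a five-year term, and that maintenance fees, renewals, and churn are ignored. The function and its parameters are hypothetical and do not model Teradata's actual accounting.

```python
def annual_revenue(deal_value, term_years, deals_per_year, horizon_years):
    """Recognized revenue per year when identical deals are signed annually.

    Each deal is recognized evenly over `term_years`. Setting
    term_years=1 models an upfront, perpetual-style deal.
    """
    per_deal_per_year = deal_value / term_years
    revenue = []
    for year in range(1, horizon_years + 1):
        # Only deals signed within the last `term_years` years still contribute.
        active_cohorts = min(year, term_years)
        revenue.append(active_cohorts * deals_per_year * per_deal_per_year)
    return revenue


# $ millions; one $5 million deal signed per year over a six-year horizon.
perpetual = annual_revenue(5.0, 1, 1, 6)
subscription = annual_revenue(5.0, 5, 1, 6)

print("perpetual:   ", perpetual)     # [5.0, 5.0, 5.0, 5.0, 5.0, 5.0]
print("subscription:", subscription)  # [1.0, 2.0, 3.0, 4.0, 5.0, 5.0]
```

Under these assumptions the subscription business signs exactly as much new business as the perpetual one, yet reports only a fifth of the revenue in the first year and does not catch up until the fifth. That gap between bookings and recognized revenue is the shape of the J-curve.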

Moats Require Constant Reinvestment. Teradata's moat in 2005 was one of the strongest in enterprise technology: proprietary hardware, specialized software, deep professional services, and astronomical switching costs. Fifteen years later, each layer of that moat had eroded. Hardware became commoditized through cloud. Software differentiation narrowed as competitors invested in query optimization. Professional services were matched by systems integrators working for competitors. Switching costs remained significant but were being systematically attacked by migration tools. The lesson is that a moat is not a wall you build once — it is a garden you tend constantly. Every year of underinvestment in competitive differentiation was a year of moat erosion.

The Cloud Timing Trap. There is an instructive comparison between Oracle and Teradata on cloud timing. Oracle, under Larry Ellison, moved to cloud earlier but did so in a way that many customers perceived as forced migration — aggressive licensing changes that pushed customers toward Oracle Cloud whether they wanted it or not. The result was customer resentment and defection. Teradata, by contrast, moved too late and too timidly, giving customers time to discover alternatives before Teradata had a credible cloud offering. Neither approach was optimal. The lesson is that cloud transitions require a Goldilocks balance: early enough to be credible, customer-centric enough to retain trust, and aggressive enough to build momentum before competitors establish themselves. Getting that balance wrong in either direction is enormously costly.

Capital Allocation Matters Enormously. Consider the Aprimo acquisition: $525 million spent in 2010 on a marketing applications company that was sold six years later for roughly $90 million. That $525 million, invested instead in cloud infrastructure and engineering, might have given Teradata a two-to-three-year head start on its cloud transition. Capital allocation is not just about what you buy; it is about the opportunity cost of what you do not build with those same dollars. Teradata also spent heavily on share buybacks during the golden years — capital returned to shareholders at peak valuation rather than invested in the cloud future that was visibly approaching. In hindsight, those buybacks were among the most expensive share repurchases in enterprise technology history.

Culture Is Strategy. Teradata was a hardware company. Its engineers built physical appliances. Its salespeople sold boxes. Its services teams installed on-premises systems. Transforming that DNA into a cloud-first, software-defined culture required not just new products but new hiring, new incentives, new processes, and new leadership. The fact that it took three CEO changes to find the right leader for the cloud transformation (and that even then, the process has taken years) illustrates how deeply organizational culture resists strategic change.

Enterprise Trust Is a Double-Edged Sword. Teradata's greatest asset — the deep trust of Fortune 500 IT departments earned over decades of reliable service — was also its greatest liability. That trust made customers reluctant to leave, giving Teradata time to execute its cloud transition. But it also made Teradata complacent, slow to acknowledge competitive threats, and dependent on a customer base that was aging and shrinking. When your customers trust you enough to stay despite better alternatives existing, you can mistake loyalty for endorsement.